How to Digitally Enable Performance

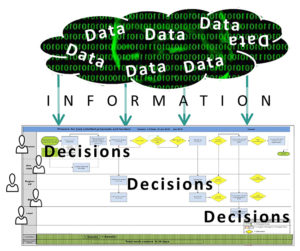

The promise of better performance via digitization and real-time access to information is best achieved if we begin with a process-centric view. From there, ask: What minimum information do the people in each process need to make the best possible decisions? By doing so, we separate the wheat from the chaff and relay only the information required to speed decisions, enable agility and lift results.

Parse your data thoughtfully to make better decisions faster and get the most out of any process.

We’re impatient. In delivering products and services, everybody wants to improve results, yet progress rarely matches expectations. When it comes to creating an advantage, few things hold greater promise than digitization. We’re well equipped with computers, smartphones, tablets and touch screens… and lots of data. The cloud allows us to distribute huge quantities of information with ease. In spite of all this technology, we get bogged down when it comes to parsing out the critical bits of information needed to make the people-process interface work best.

What Does Digitally Enabled Change Mean to Performance?

The combination of “digitally enabled change” and “performance” means different things to different people, so we’re seldom on the same page. The connection between digitization and performance is twofold: first, it should enable better and faster decisions; and second, it should help us compare results in order to spread best demonstrated practices. Such entitlements are pure gold for a large enterprise. Let’s take a closer look.

- Faster Decisions: If we collect, parse and distribute the right information to the appropriate stakeholders in an environment that’s equipped to use it, better decisions become possible up and down the value chain, and lead times shrink. The ability to reduce lead time is an enterprise’s best indicator of competitiveness.

- Comparative Evaluation: Data allows us to compare results between procedures, processes and equipment across time and space. This allows us to identify performance trends and to evaluate and spread best practices. My friend and colleague Hans-Georg Scheibe with ROI* describes this quite well:

“In our projects, ‘digitally enabled change and performance’ often means to provide the right information to different stakeholders. E.g. to gather data from comparable machines all over the world, and to compare a dedicated machine with the others. There is always a best and a worst and when you ask why, you get a chance to improve. Or to gather best practices from different teams and to provide this knowledge in a manner that somebody else can use to solve problems directly, or faster or better. This requires connectivity to gather the data – to have a kind of intelligence to make information out of the data and then to turn information into knowledge.”

A Process-Centric Approach

Instead of starting with all the available data – both signal and noise – and pushing it into the process, we start by looking at the process itself and asking what minimum information (data) the people closest to the work require to make the best decisions.

Why is worker inclusion so important? In practice, we’ve found that only the people doing the work can reliably tell you what information is essential to getting the job done right. Thus, engaging them in front-end information conditioning is vital to actually reducing lead times, revealing improvement opportunities and paving the way for a better future-state work process.

The Decision Map

As its name implies, a Decision Map emphasizes the idea that faster and better decisions are the precursor to optimal processes. We start with a fairly traditional view so we can see the whole process laid flat on the wall. It could be a swim lane map, a box-and-wire diagram, a value stream map, etc. Then we identify the places where information is required to make decisions and take action. At each of these points, we pose questions that seem simple enough but often prove to be thorny lines of inquiry, such as:

| Question | Why it matters |
|---|---|
| Who makes the decision, and is this the right person? | There can be no room for ambiguity. |
| What information is really necessary? | Less is more. Extraneous information is inventory, and inventory has a carrying cost. |
| Is the decision point in the right place in the value stream, or could it be moved or consolidated? | When more than one point makes a decision, it’s an opportunity for no decision. |
| What’s the best way to collect and convey the information? | Critical point here. Some information is best collected and conveyed electronically; some is better suited for human involvement. This must be sorted out deliberately. |

The map shows the different functions and accountabilities. You will find that some of the same data and information is allocated to more than one location for different purposes. The point is that information is not pushed wholesale to users for them to sort out. Rather, it is distributed with a logical intent to provide only the information necessary to make the best decision at any given node.

Conclusions

For faster and better point-of-use decisions, information must be relevant to the user. For this reason, we emphasize data that is layered according to function and accountability. This can feel daunting in an environment that identifies with “everybody can and perhaps should know everything.” Even so, a disciplined “need to know” approach makes whatever information is conveyed seem more valuable to the user because it is concise and actionable: the wheat is kept and the chaff is left behind.

In the end, digitization can enable change and performance in remarkable ways. But the concept works best when we don’t just throw all kinds of data at people and processes and hope for the best. Up-front evaluation of the process with the people doing the work is the right way to start. It makes change stickier and reduces rework and frustration. Most of all, it gets to optimal performance much, much faster.

==========

Want to know more about how Kaufman Global helps clients manage change and improve performance? Contact us.

*ROI Management Consulting AG is a German-headquartered network partner of ours that specializes in operational performance.

Over the past few years, we’ve observed that clients want answers faster than ever before. And while it could be that “time is money,” it seems to us that it’s more related to the frenetic pace of, well, everything these days. Headlines and “apps” often don’t dig deep enough, and the “Ready, Fire, Aim” approach has great potential for missteps.

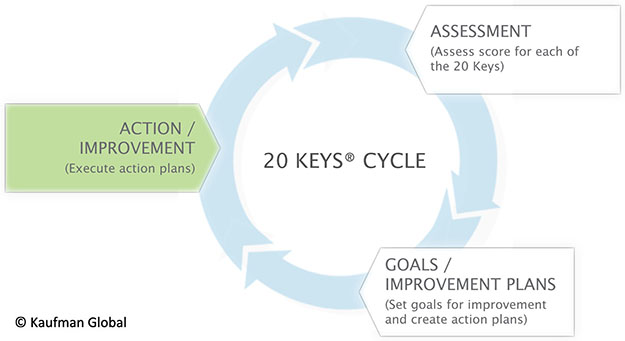


As identified in the 20 Keys Cycle illustration above, the method drives a continuous cycle of improvement and builds on prior efforts. In an assessment typically repeated four times per year, location leadership or a designated representative works with the workgroup to assess its score for each of the 20 keys. This is an honest, direct exchange in which they score each key against known criteria. Once the assessment is done, the same workgroup decides which key(s) to focus on improving. The objective is for the workgroup to increase its overall score by 10 points per year.